Since the Fediverse stuff is catching on, I decided to upgrade my Mastodon deployment. New name, Kubernetes deployment, encryption, the whole thing. Also, moving my account across instances went pretty well.

Why are you doing this?

I had previously done a shoddy deployment of Mastodon, because it didn’t make sense to invest in something that I might be abandoning immediately. It was running fine, but I didn’t like a few things:

- The branding being closely tied to Brownfield.dev

- Excessively long domain name

- Hastily deployed Minio

The new deployment will fix these problems, possibly leaving more in their wake.

What’s deployed where?

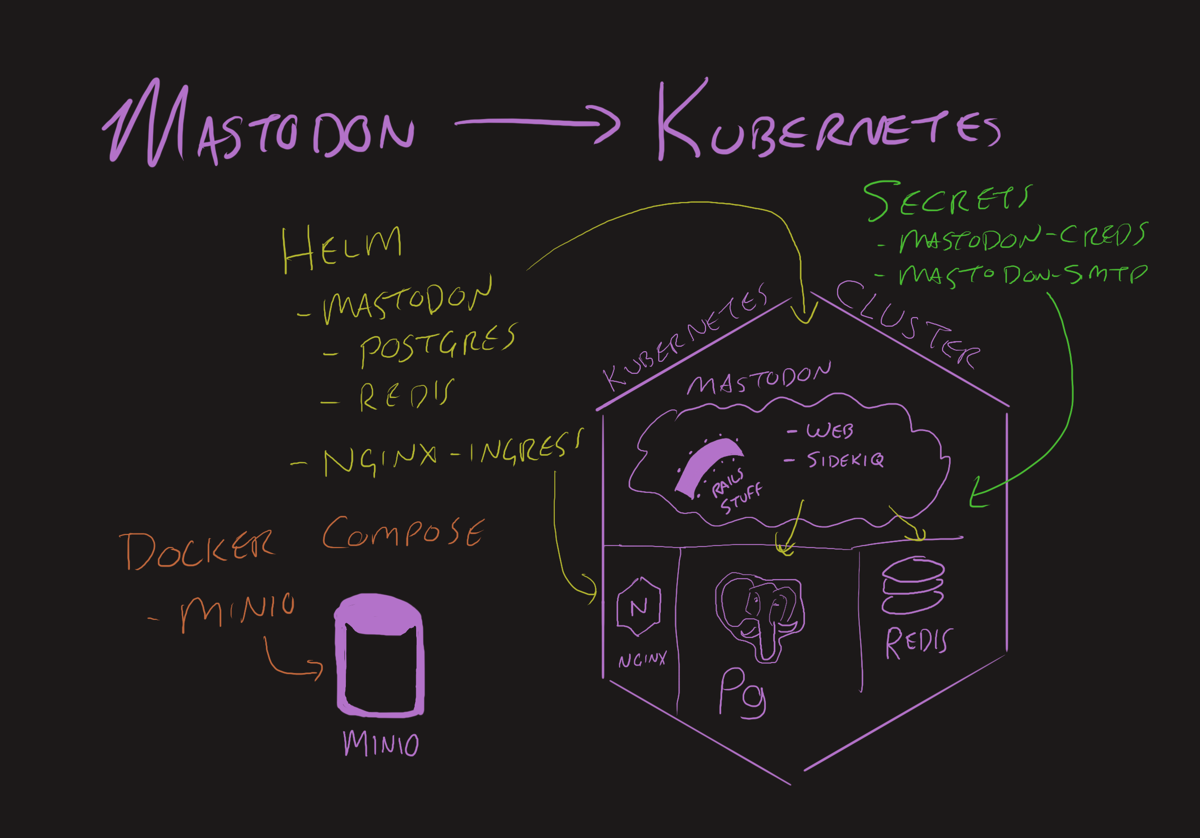

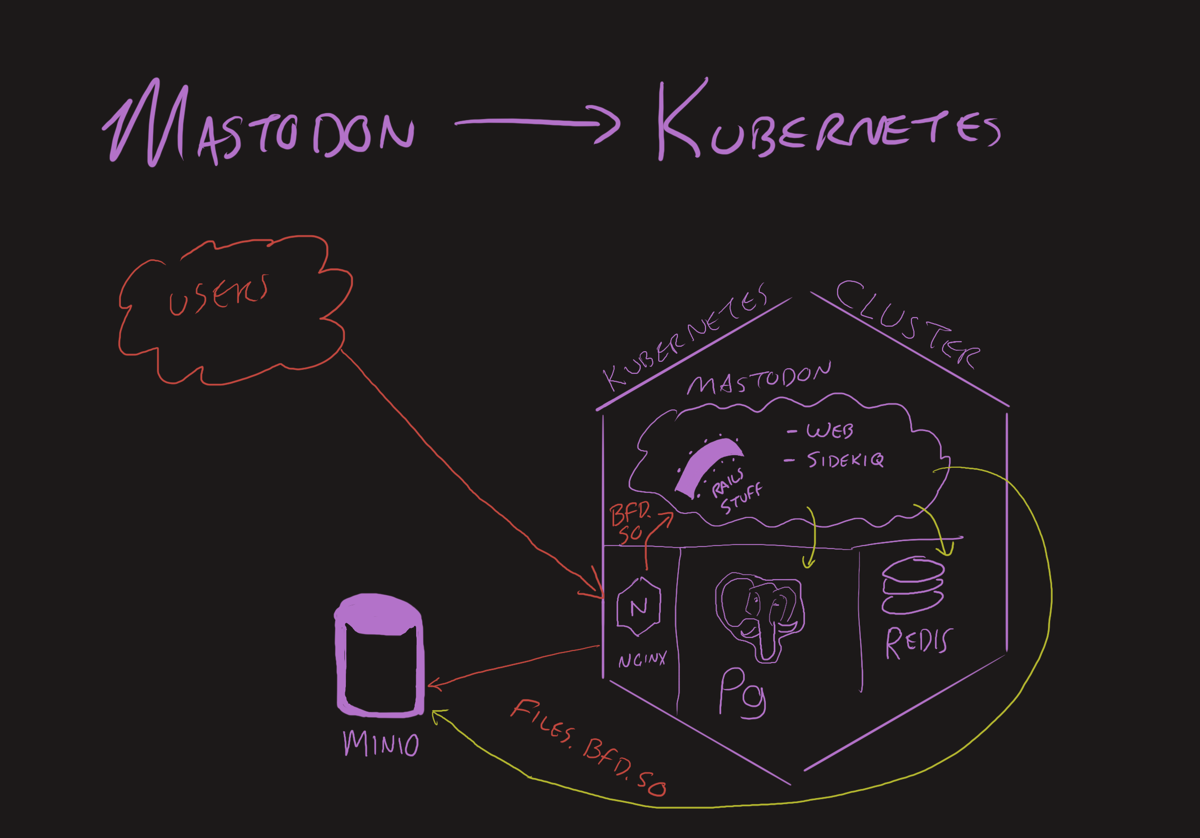

In the new setup, two platforms run things: a storage host and a Kubernetes cluster. The storage host runs the object storage only. The Kubernetes cluster runs everything else.

User traffic hits the Nginx Ingress. Requests for the "files" subdomain go to the storage host, and "bfd.so" traffic goes to Mastodon.

For information on the Minio deployment and how Nginx points to an external service, check out the prior story.

Helm chart values

The Mastodon Helm chart is pretty well formatted and didn’t have surprising behaviors.

The Mastodon app stuff is all in the templates directory like normal. Additional software like Redis and Postgres is in subcharts. Values for these things are sectioned nicely in the values file. Defaults are reasonable!

Customizations

If you look at the chart, it creates a secret full of passwords and manages them for you from Helm. If you use GitOps and don’t want your passwords in the repo, you can create the secret yourself with a crazy shenanigan of a command like this.

    $ export $(docker run --rm tootsuite/mastodon bundle exec rake mastodon:webpush:generate_vapid_key | tr '\n' ' ')
    $ kubectl -n mastodon-bfd create secret generic mastodon-creds \
        --from-literal=redis-password='[redis password]' \
        --from-literal=password='[postgres password]' \
        --from-literal=postgres-password='[postgres password]' \
        --from-literal=AWS_ACCESS_KEY_ID='[object storage id]' \
        --from-literal=AWS_SECRET_ACCESS_KEY='[object storage secret]' \
        --from-literal=SECRET_KEY_BASE="$(docker run --rm tootsuite/mastodon bundle exec rake secret)" \
        --from-literal=OTP_SECRET="$(docker run --rm tootsuite/mastodon bundle exec rake secret)" \
        --from-literal=VAPID_PRIVATE_KEY="$VAPID_PRIVATE_KEY" \
        --from-literal=VAPID_PUBLIC_KEY="$VAPID_PUBLIC_KEY"
    $ unset VAPID_PRIVATE_KEY
    $ unset VAPID_PUBLIC_KEY

Both Postgres and SMTP look at the password key so we need a separate secret for SMTP.

    $ kubectl -n mastodon-bfd create secret generic mastodon-smtp \
        --from-literal=login='[smtp login]' \
        --from-literal=password='[smtp password]'

Since some of these secrets were generated directly into the kubectl command, it’s probably worth taking a backup of the k8s secrets immediately. Throw them in 1Password or something secure.
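A minimal sketch of that backup: dump each secret to a YAML file with kubectl get secret -o yaml, then move the files into your password manager. The backup_secret helper name and the .backup.yaml file naming are assumptions made for this example, not something the chart provides.

```shell
# Dump a Kubernetes secret to a local YAML file for safekeeping.
# backup_secret is a hypothetical helper; the output file name is an assumption.
backup_secret() {
  namespace="$1"
  name="$2"
  kubectl -n "$namespace" get secret "$name" -o yaml > "${name}.backup.yaml"
}

# Usage, for the two secrets created above:
# backup_secret mastodon-bfd mastodon-creds
# backup_secret mastodon-bfd mastodon-smtp
```

The values in the dump are only base64-encoded, not encrypted, so delete the local files once they are stored somewhere safe.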

    mastodon:
      s3:
        enabled: true
        existingSecret: "mastodon-creds"
      secrets:
        existingSecret: "mastodon-creds"
      smtp:
        existingSecret: "mastodon-smtp"
    postgresql:
      enabled: true
      auth:
        database: mastodon_production
        username: mastodon
        existingSecret: "mastodon-creds"
    redis:
      enabled: true
      hostname: ""
      port: 6379
      auth:
        existingSecret: "mastodon-creds"
      replica:
        replicaCount: 1

After applying these changes, the migration pod wouldn’t start because it was missing secrets.

I had to manually create a couple of secrets for {name}-postgres and {name}-redis to get the migration to finish properly.
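To find out which secrets a stuck pod is waiting on, the namespace events are usually the quickest place to look. A minimal sketch, assuming the event message mentions both "secret" and "not found" (the exact wording can differ between Kubernetes versions); missing_secrets is a hypothetical helper name.

```shell
# List recent events in a namespace and keep only the ones complaining
# about a secret that does not exist.
# Assumption: the event message contains "secret" followed by "not found".
missing_secrets() {
  namespace="$1"
  kubectl -n "$namespace" get events --sort-by=.lastTimestamp \
    | grep -i 'secret.*not found'
}

# Usage:
# missing_secrets mastodon-bfd
```

Each matching line names a secret the pod expects, which tells you what to create before the migration job can start.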

Redis overkill replicas

Because my cluster is small, I turned the Redis replicas way down. The replicaCount: 1 in the code block above handles this.

Object storage

In addition to the credentials stored in the secret above, we have to make sure our mastodon pods can talk to the Minio server.

To do this, set mastodon.s3.endpoint and mastodon.s3.hostname.

Also, we need to tell browsers where to read the files from, which is what alias_host is for.

    mastodon:
      s3:
        enabled: true
        existingSecret: "mastodon-creds"
        bucket: "bfd-so"
        endpoint: "https://files.bfd.so"
        hostname: "files.bfd.so"
        region: "nh"
        alias_host: "files.bfd.so/bfd-so"

If you skip the endpoint or hostname, it’ll default to AWS endpoints.
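To see what these settings mean for a browser: the public media links are the alias_host followed by the object's key, which is why the alias_host above includes the bucket name. A minimal sketch of that mapping, assuming HTTPS; media_url is a hypothetical helper and the object key is made up for illustration.

```shell
# Build the public URL a browser would request for a stored object.
# Assumption: links are served over HTTPS as <alias_host>/<object key>.
media_url() {
  alias_host="$1"
  object_key="$2"
  printf 'https://%s/%s\n' "$alias_host" "$object_key"
}

# The object key here is hypothetical, purely for illustration.
media_url "files.bfd.so/bfd-so" "media_attachments/files/example.png"
# prints https://files.bfd.so/bfd-so/media_attachments/files/example.png
```

Requesting a real media URL of that shape with curl -I from outside the cluster is a quick check that the storage host is reachable and serving files.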

Email alerts

The email setup worked fine except I forgot to add the alias to my mailbox so it was rejecting traffic.

The logs can be found with kubectl logs -n mastodon-bfd deployment/mastodon-bfd-sidekiq-all-queues.

Adding --follow will tail the log as it is written so you can trigger the email event and watch for the error logs.
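Put together, a minimal sketch for watching only the mail-related lines. The grep pattern is an assumption about what the interesting log lines contain, and follow_mail_logs is a hypothetical helper name.

```shell
# Follow the Sidekiq log and show only lines that mention mail delivery.
# Assumption: relevant lines contain "smtp" or "mail" (case-insensitive).
follow_mail_logs() {
  kubectl logs -n mastodon-bfd deployment/mastodon-bfd-sidekiq-all-queues --follow \
    | grep -i -E 'smtp|mail'
}

# Usage: start this in one terminal, then trigger an email from the web UI.
# follow_mail_logs
```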

    mastodon:
      smtp:
        from_address: notifications@bfd.so
        port: 587
        reply_to: notifications@bfd.so
        server: smtp.mail.me.com
        tls: false
        existingSecret: mastodon-smtp

Ingress

Just needed my hostname, ingress class, and cluster issuer.

    ingress:
      enabled: true
      annotations:
        cert-manager.io/cluster-issuer: "external"
        acme.cert-manager.io/http01-edit-in-place: "true"
        nginx.ingress.kubernetes.io/proxy-body-size: 40m
        nginx.org/client-max-body-size: 40m
      ingressClassName: external
      hosts:
        - host: bfd.so
          paths:
            - path: '/'
      tls:
        - secretName: bfd-so-tls
          hosts:
            - bfd.so

Deployment time

Now that we have one or more values.yaml files and the secrets created, let’s install the thing!

    git clone https://github.com/mastodon/chart mastodon-chart
    cd mastodon-chart
    helm upgrade -i -n mastodon-bfd mastodon-bfd ./ -f ~/bfd-so-values.yaml

Since it has to start a bunch of stuff and bootstrap, it can take a few minutes.
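Rather than refreshing the pod list by hand, kubectl rollout status can block until a deployment is ready. A minimal sketch, using only the deployment names that appear elsewhere in this post; the 10 minute timeout and the wait_for_mastodon name are assumptions.

```shell
# Block until the named deployments report a finished rollout.
# Deployment names are taken from this post; the timeout is an arbitrary choice.
wait_for_mastodon() {
  for deploy in mastodon-bfd-web mastodon-bfd-sidekiq-all-queues; do
    kubectl -n mastodon-bfd rollout status "deployment/${deploy}" --timeout=10m || return 1
  done
}

# Usage, right after the helm upgrade:
# wait_for_mastodon && echo "Mastodon is up"
```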

WTF, Elastic!?

For no discernible reason, the Elasticsearch pods wouldn’t come up healthy. Could be that my cluster didn’t have enough nodes or something. I decided to forgo it for now.

    elasticsearch:
      enabled: false

Elasticsearch backs Mastodon’s optional full-text search, so that is presumably what’s missing without it. The client doesn’t seem to have any issues with doing regular stuff.

Administratorinating the instance

The least Kubernetes-like (and most Rails-like) thing about this is the command line usage.

    kubectl exec -it -n mastodon-bfd deploy/mastodon-bfd-web -- bash

Then you can do things like make your account an owner.
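For the owner example, a minimal sketch using Mastodon's tootctl admin CLI. It assumes tootctl is on the PATH inside the web container and that the Mastodon version has an Owner role (4.0 and later); make_owner is a hypothetical wrapper name.

```shell
# Grant the Owner role to a local account by running tootctl in the web pod.
# Assumption: tootctl is on the PATH in the container image.
make_owner() {
  username="$1"
  kubectl exec -n mastodon-bfd deploy/mastodon-bfd-web -- \
    tootctl accounts modify "$username" --role Owner
}

# Usage:
# make_owner mterhar
```

The same tootctl command works from the interactive bash session above if you would rather poke around first.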

Migrating @mterhar to the new place

This is the coolest thing I’ve seen in a social media platform. Every other one puts a massive burden on you when you try to go elsewhere. The whole migration process probably took 2 minutes, and only that long because I was reading about it while I was doing it.

- Create the new account

- In the new account, add an alias by using “transfer from another instance”.

- In the old account, go to settings and use “transfer to another account.”

- Export your follows

- Import your follows

- Add profile picture and banner and bio stuff

- Update the rel="me" link on your website

- Keep the old server running for a bit

Enjoy being cooler!!1

If these instructions helped or sucked, let me know at @mterhar@bfd.so. This way, if they sucked so bad you can’t get Mastodon running, I won’t hear about it. Selection bias is at it again!